[AAAI 2022] FCA: Learning a 3D Full-coverage Vehicle Camouflage for Multi-view Physical Adversarial Attack

Overview

This is the official implementation and case study of the Full-coverage Vehicle Camouflage (FCA) method proposed in our AAAI 2022 paper FCA: Learning a 3D Full-coverage Vehicle Camouflage for Multi-view Physical Adversarial Attack.

Source code can be found here.

Abstract

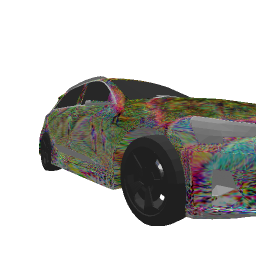

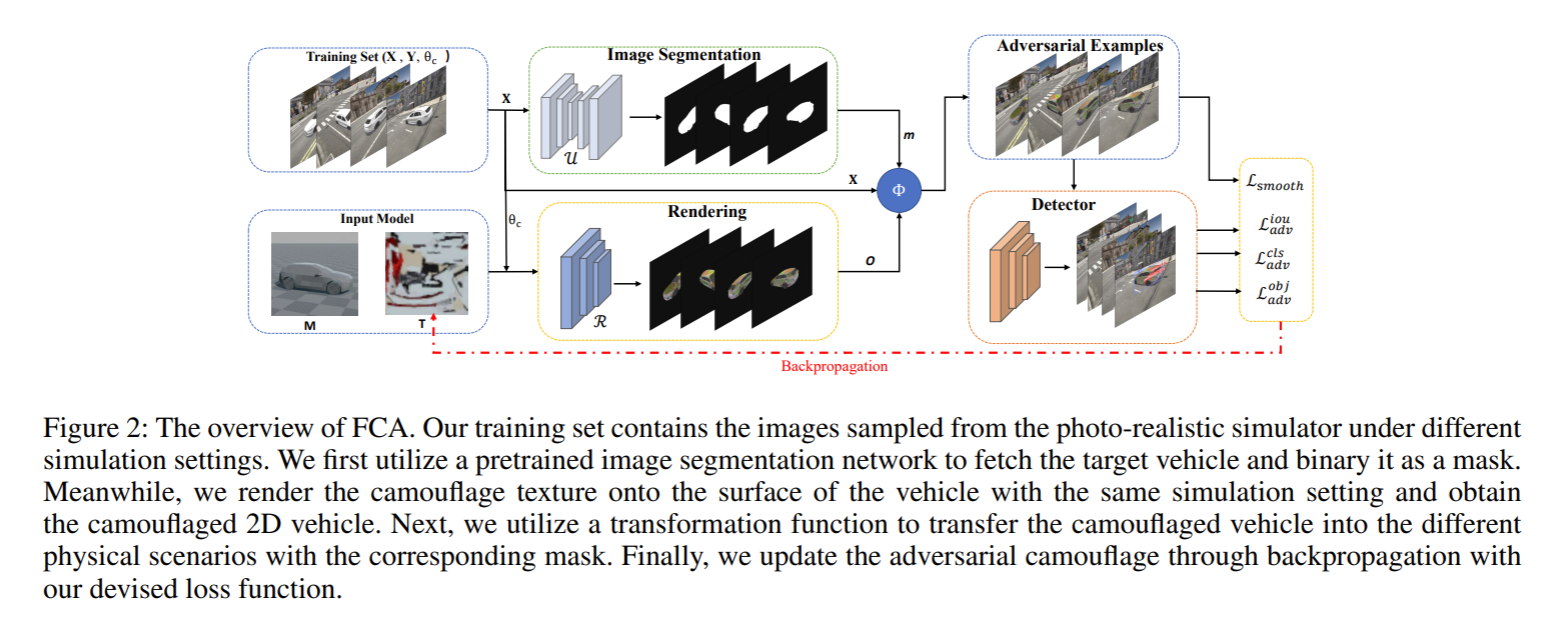

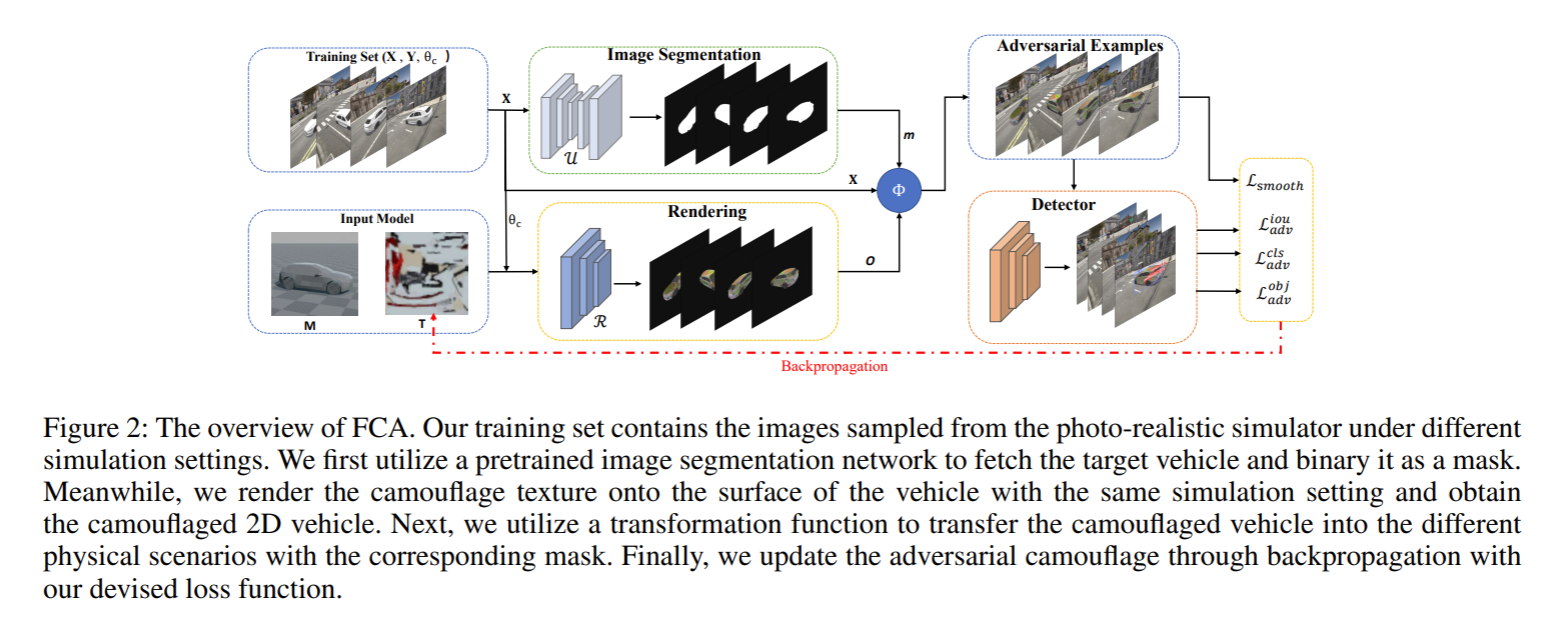

Physical adversarial attacks in object detection have attracted increasing attention. However, most previous works focus on hiding the objects from the detector by generating an individual adversarial patch, which only covers the planar part of the vehicle’s surface and fails to attack the detector in physical scenarios for multi-view, long-distance and partially occluded objects. To bridge the gap between digital attacks and physical attacks, we exploit the full 3D vehicle surface to propose a robust Full-coverage Camouflage Attack (FCA) to fool detectors. Specifically, we first try rendering the non-planar camouflage texture over the full vehicle surface. To mimic the real-world environment conditions, we then introduce a transformation function to transfer the rendered camouflaged vehicle into a photo-realistic scenario. Finally, we design an efficient loss function to optimize the camouflage texture. Experiments show that the full-coverage camouflage attack can not only outperform state-of-the-art methods under various test cases but also generalize to different environments, vehicles, and object detectors.
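The step that places the rendered vehicle into a scene can be illustrated with mask-based compositing, a common way to paste a rendered object onto a background image. This is only a sketch: this section does not specify how the transformation function is built, so the blending rule and the name `composite` are assumptions, and images are represented as plain nested lists of grayscale values for brevity.

```python
def composite(rendered, background, mask):
    """Blend a rendered object onto a background image.

    Hypothetical helper (not taken from the FCA code): each argument is a
    2D list of floats with the same shape. `mask` is 1.0 where the rendered
    vehicle is visible and 0.0 elsewhere; fractional values blend the two.
    """
    return [
        [m * r + (1.0 - m) * b for r, b, m in zip(r_row, b_row, m_row)]
        for r_row, b_row, m_row in zip(rendered, background, mask)
    ]


if __name__ == "__main__":
    rendered = [[1.0, 1.0]]
    background = [[0.0, 0.5]]
    mask = [[1.0, 0.0]]
    # The first pixel comes from the render, the second from the background.
    print(composite(rendered, background, mask))
```

Because the blend is a simple weighted sum, it stays differentiable with respect to the rendered pixels when written with a tensor library, which is what texture optimization would need.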

Framework

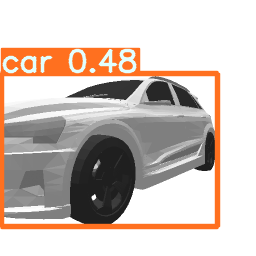

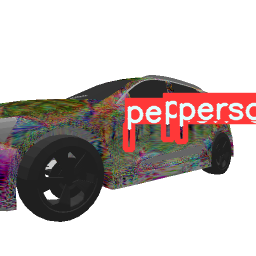

Cases of Digital Attack

Multi-view Attack: Camera distance is 3

| | Elevation 0 | Elevation 30 | Elevation 50 |
|---|---|---|---|
| Original | | | |
| FCA | | | |

Multi-view Attack: Camera distance is 5

| | Elevation 20 | Elevation 40 | Elevation 50 |
|---|---|---|---|
| Original | | | |
| FCA | | | |

Multi-view Attack: Camera distance is 10

| | Elevation 30 | Elevation 40 | Elevation 50 |
|---|---|---|---|
| Original | | | |
| FCA | | | |

Multi-view Attack: different distance, elevation and azimuth
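Each viewpoint in these cases is described by a camera distance, an elevation and an azimuth. As a rough sketch of how such a triple maps to a camera position around the vehicle, the snippet below converts spherical coordinates to Cartesian ones. The axis convention (z up, azimuth measured in the ground plane from the x axis) and the function names `camera_position` and `view_grid` are assumptions for illustration; the renderer used by FCA may use a different convention.

```python
import itertools
import math


def camera_position(distance, elevation_deg, azimuth_deg):
    """Return (x, y, z) of a camera looking at the origin.

    Assumed convention: z points up, elevation is the angle above the
    ground plane, azimuth is measured in the ground plane from the x axis.
    """
    elev = math.radians(elevation_deg)
    azim = math.radians(azimuth_deg)
    x = distance * math.cos(elev) * math.cos(azim)
    y = distance * math.cos(elev) * math.sin(azim)
    z = distance * math.sin(elev)
    return (x, y, z)


def view_grid(distances, elevations_deg, azimuths_deg):
    """Enumerate every (distance, elevation, azimuth) combination."""
    return [
        (d, e, a, camera_position(d, e, a))
        for d, e, a in itertools.product(distances, elevations_deg, azimuths_deg)
    ]


if __name__ == "__main__":
    # Distances and some elevations from the cases above; azimuths are illustrative.
    for view in view_grid([3, 5, 10], [0, 30, 50], [0, 90]):
        print(view)
```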

Partial occlusion

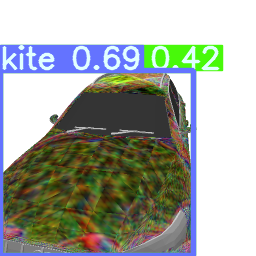

Ablation study

Different combination of loss terms

As the figure shows, the loss terms play different roles in the attack. The camouflaged car generated with obj+smooth (denoted obj, with the smooth loss omitted from the label) successfully hides the vehicle from the detector; the camouflaged car generated with iou suppresses the detected bounding box over the car region; and the camouflaged car generated with cls causes the detector to misclassify the car as another category.
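To make the ablation concrete, here is a minimal sketch of how a subset of loss terms could be switched on and combined with a smoothness penalty. It is not the authors' implementation: the plain sum of terms, the weight `smooth_weight`, the detection dictionary layout, and the squared-neighbour-difference form of the smooth loss are all assumptions chosen for illustration.

```python
def smooth_loss(texture):
    """Sum of squared differences between neighbouring pixels of a 2D grid."""
    rows, cols = len(texture), len(texture[0])
    total = 0.0
    for i in range(rows):
        for j in range(cols):
            if i + 1 < rows:
                total += (texture[i][j] - texture[i + 1][j]) ** 2
            if j + 1 < cols:
                total += (texture[i][j] - texture[i][j + 1]) ** 2
    return total


def detection_losses(detections):
    """Take the strongest car response for each term.

    `detections` is a hypothetical list of dicts with keys 'obj'
    (objectness), 'cls' (car class score) and 'iou' (overlap with the
    ground-truth car box). Minimizing each value weakens the detection.
    """
    if not detections:
        return {"obj": 0.0, "cls": 0.0, "iou": 0.0}
    return {key: max(d[key] for d in detections) for key in ("obj", "cls", "iou")}


def total_loss(detections, texture, terms=("obj", "iou", "cls"), smooth_weight=0.1):
    """Sum the selected detection terms and add the weighted smooth loss."""
    per_term = detection_losses(detections)
    return sum(per_term[t] for t in terms) + smooth_weight * smooth_loss(texture)


if __name__ == "__main__":
    dets = [{"obj": 0.9, "cls": 0.8, "iou": 0.7}, {"obj": 0.2, "cls": 0.95, "iou": 0.1}]
    flat_texture = [[0.5, 0.5], [0.5, 0.5]]
    for terms in (("obj",), ("iou",), ("cls",), ("obj", "iou", "cls")):
        print(terms, total_loss(dets, flat_texture, terms=terms))
```

Passing a single entry in `terms` corresponds to one ablation setting (for example obj+smooth), while passing all three corresponds to the full combination.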

Different initialization ways

| original | basic initialization | random initialization | zero initialization |
|---|---|---|---|
| | | | |

Cases of Physical Attack